Writes you a job description.

a RunLobster company

Managed OpenClaw Hosting, one-click deploy.

Each agent runs on its own cloud computer, completely separate from yours. It has a browser, apps, terminal, and file system: everything it needs to ship real work on your behalf — 24/7.

Reads, categorizes, and drafts replies to your email on a schedule

Calendar summary, priority emails, and task updates delivered each morning

Vendor follow-ups, meeting confirmations, and document requests on autopilot

Analyzes top-ranking pages and competitor content strategies

Scheduled editorial calendar — a new post every week

Tracks positions and rewrites underperforming content

Opens prospect websites in a real browser for genuine personalization

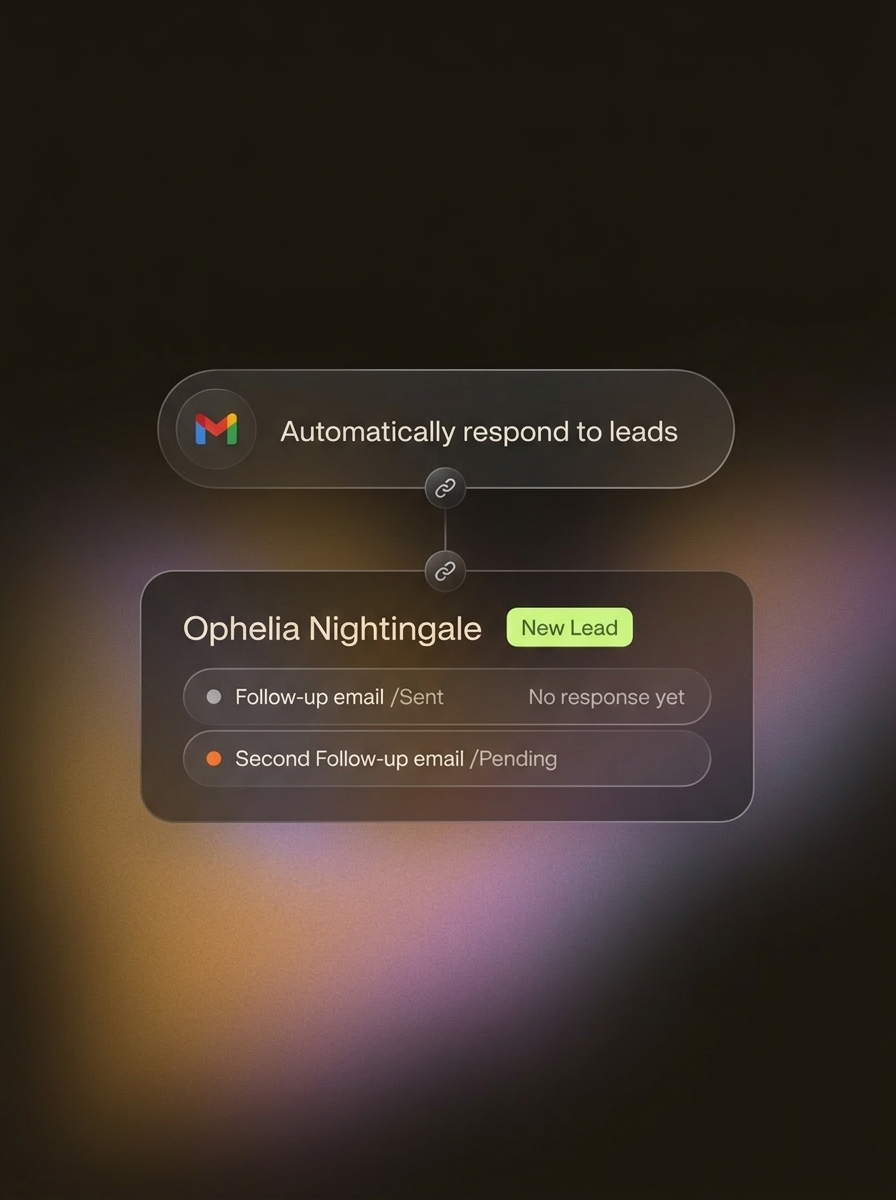

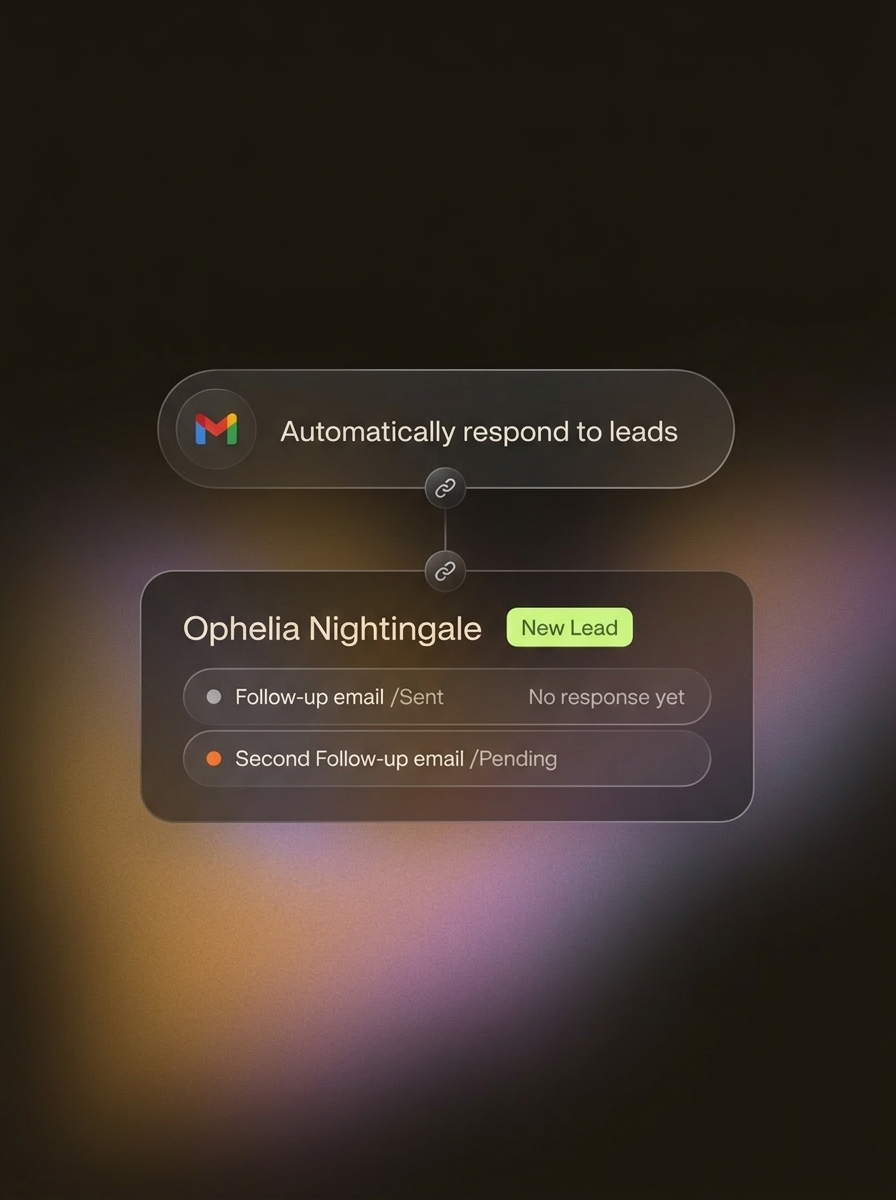

Multi-day follow-ups on a schedule that adapt to engagement

Pipeline stages, notes, and meeting briefs — all handled

Deploys and maintains apps at a public URL — pushes updates and monitors uptime

Any package, any language, full terminal access — a persistent dev machine

Cron health checks and Slack alerts while you sleep

Runs end-to-end regression suites against production automatically

Catches regressions before users do with visual evidence

Maintains and evolves test scripts — learns from false positives

Text, images, and video scripts across all platforms on a calendar

Tracks metrics, alerts on viral content or negative sentiment

Maintains brand voice with personality — consistent across platforms

Handles tickets on Slack, Discord, email, web chat, and Telegram simultaneously

Tracks patterns, identifies common issues, and writes documentation

Complex issues go to humans via Slack or email with ticket history attached

Updates listings and pricing across platforms via browser

Persistent tracking with alerts and auto-adjustments

Stock monitoring, restock alerts, and order processing

Pings endpoints, checks SSL, monitors disk and CPU around the clock

Reads logs, diagnoses root cause, applies known fixes automatically

Maintains runbooks and journals what worked — gets faster over time

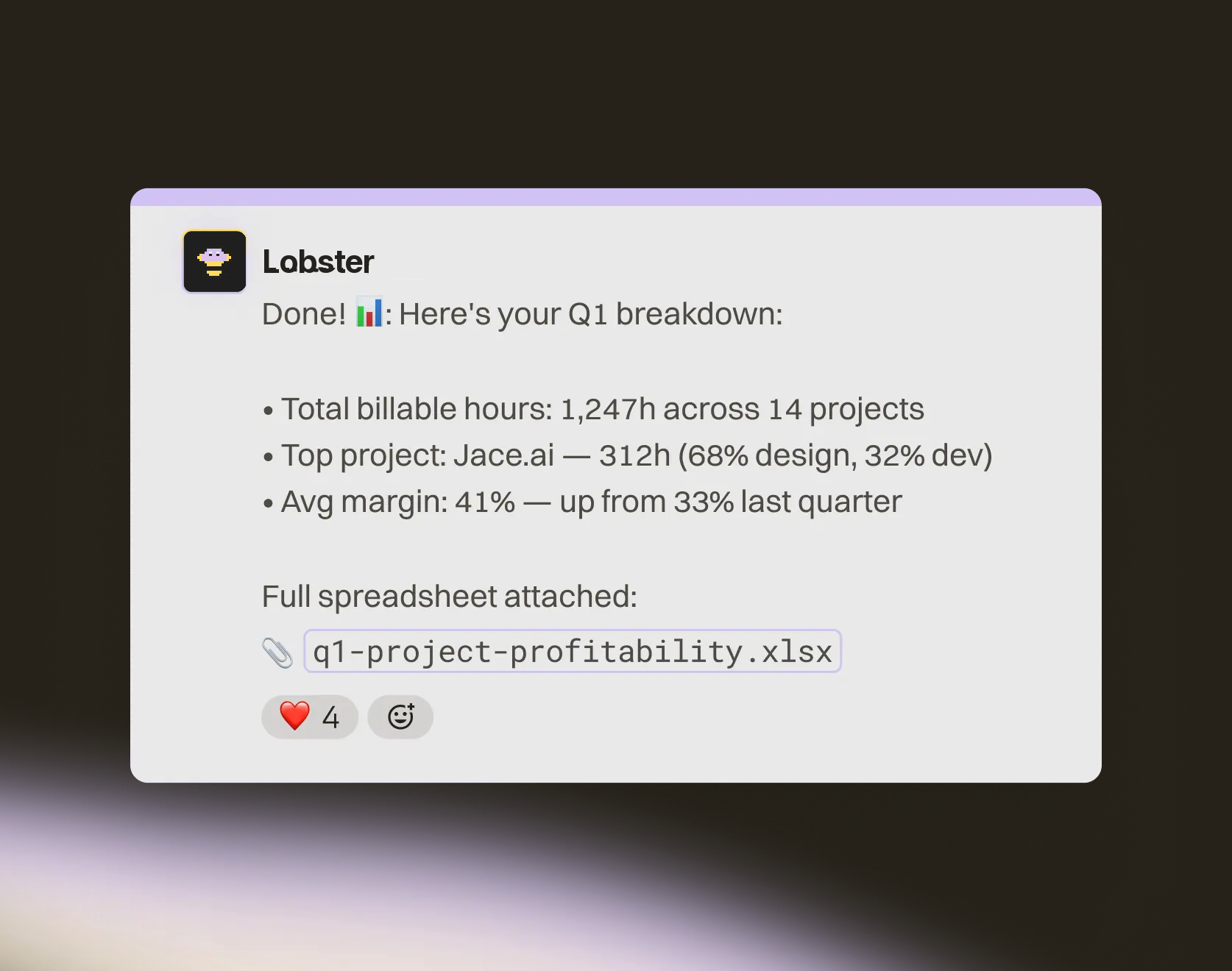

Real data science stack, not sandboxed snippets

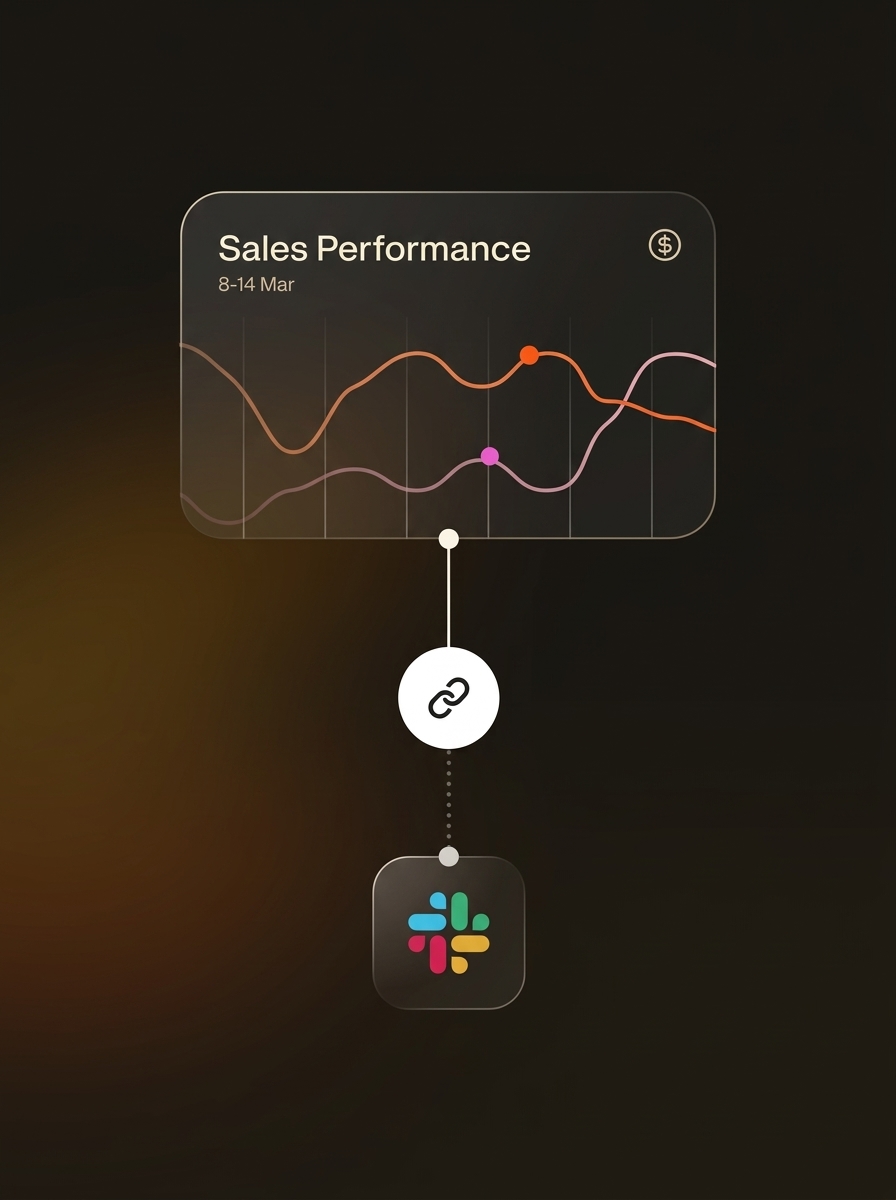

Interactive charts your team and investors can bookmark

Weekly PDFs, daily metrics, monthly board decks

Browser access to financial tools beyond API limitations

Matches transactions, categorizes expenses, flags anomalies

Real documents saved and sent via email on a schedule

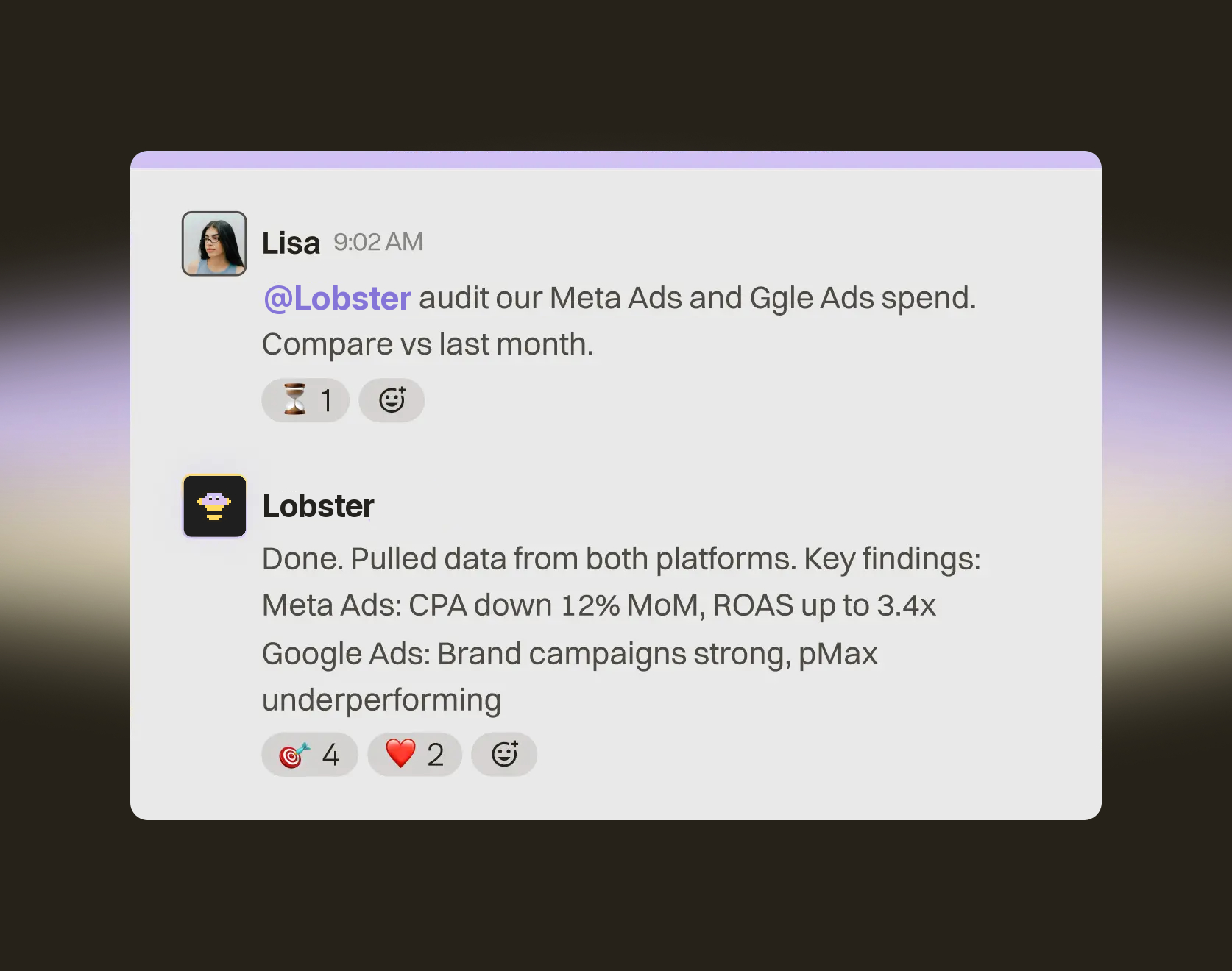

Checks Google Ads, Meta Ads — pauses underperformers, adjusts budgets

Drip campaigns, newsletters, re-engagement flows — hands-free

Pulls from GA4, PostHog, ad platforms into one performance summary

Monitors Jira, Linear, Asana — identifies blockers and overdue items

Auto-generated summaries delivered to Slack and email on a schedule

Proactive messages to team members with approaching deadlines

Alerts instantly when new listings match buyer criteria

Tracks prospects, follow-up dates, showing notes in a persistent database

Live listing pages with photos, descriptions, and contact forms at a URL

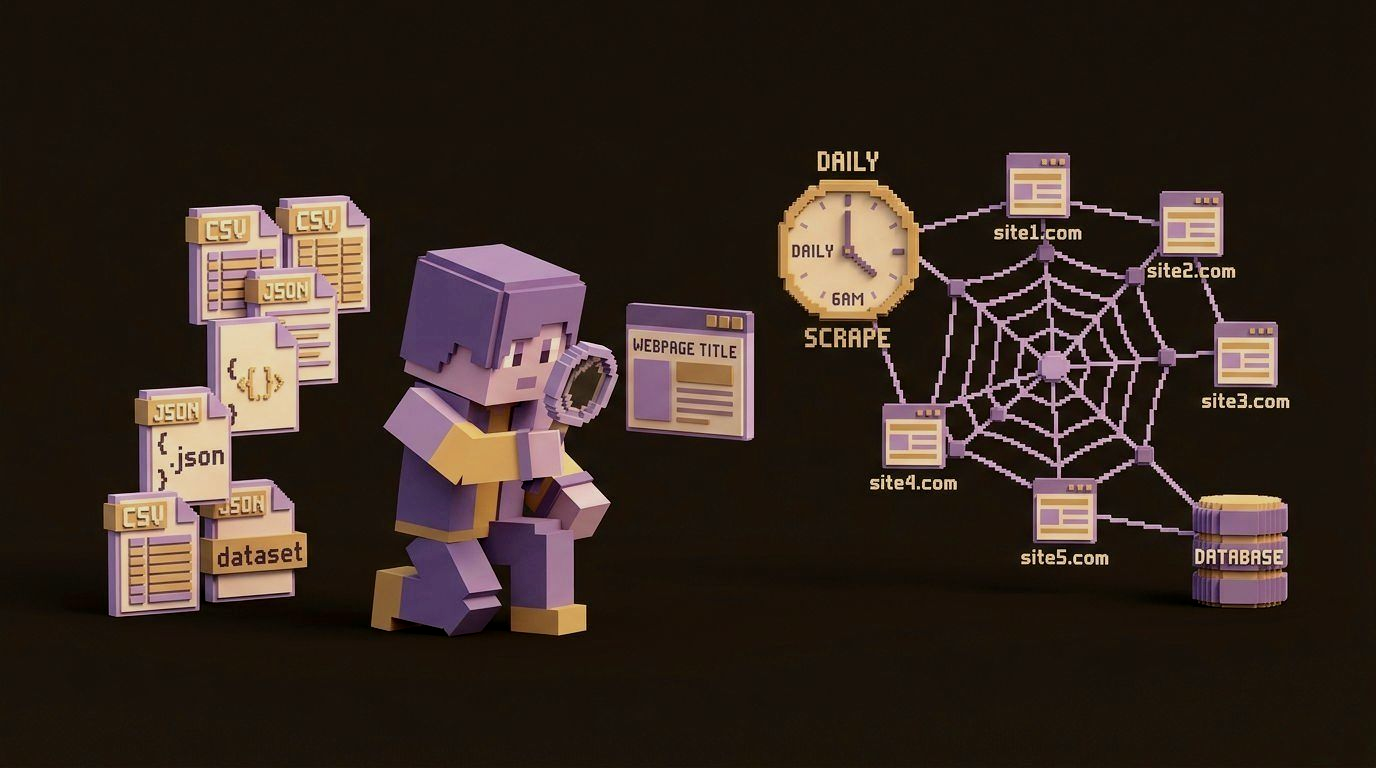

Collects data from any website on a schedule, handling pagination, logins, and JavaScript

Stores CSV, JSON, SQLite files on disk — builds datasets over weeks

Processed data sent via email, Slack, or hosted on a live dashboard

Logs into legacy tools and does the work for you

Pulls data from one system, fills a form in another, updates a third

Reconciliation, filings, onboarding — hands-free

Browser checks for pricing, feature, and content changes

Multi-channel notifications the moment something changes

Snapshots saved to filesystem for trend analysis

Browses actual profiles and portfolios in a real browser

Persistent candidate database that grows with every search

Personalized messages, scoring, and calendar coordination

Real Deliverables

PDFs, spreadsheets, slide decks, videos, full-stack web apps — if a person can work on it with a laptop, Lobster can too.

3,000+ Integrations

One-click connect to Stripe, HubSpot, Google Ads, Notion, and thousands more. You control exactly what Lobster can read, write, and access.

Deep Memory

Every conversation makes Lobster smarter about your business. It remembers what worked, what didn’t, and how you like things done.

Never repeat yourself

Open Source Core

Built on OpenClaw — fully open source. Unlike Devin or ChatGPT Agents, you can clone the repo, self-host anywhere, and take your data with you.

No vendor lock-in

Built-In Scheduler

Every other AI waits to be asked. Lobster runs on its own cadence — morning briefings, overnight checks, 3am auto-repairs — and only reaches out when something needs you.

Always running

Dedicated Compute

Every customer gets their own isolated container — its own file system, memory, and deployed apps. A hard technical boundary, not a privacy policy.

Your data, only yours

Just @Lobster.

Talk Anywhere.

Slack, Discord, iMessage, email, or web chat. Message your agent wherever you already work — it responds in real time, every channel, one brain.

Slack, Discord & iMessage

DM your agent like a teammate. It reads, replies, and takes action — right inside the apps you already use.

Proactive by Default

Lobster doesn’t wait to be asked. It messages you when something needs attention, when it finishes a task, or when it’s stuck and needs a decision.

Your Whole Team

Add Lobster to a Slack or Discord channel and everyone can talk to it. One agent, shared context, zero per-seat fees.

iMessage groups coming soon

Your inbox, handled

“Scan my inbox overnight. Flag anything urgent, draft replies to the easy ones, and send me a Slack summary at 7am with what you handled and what still needs me.”

Leads that never go cold

“When a new lead comes in, send a personalized follow-up within 10 minutes. No response in 3 days? Follow up again with a different angle.”

Travel, end to end

“For our planned team offsite in NYC in May, alert me when flight prices drop, and keep an eye out for venues available May 7–18. Send updates to Slack.”

Your personal life, organized

“I have dinner with a client on Friday but no reservation. Find well-reviewed restaurants and book a table for 2 at 8pm, then update my calendar.”

Your business, on autopilot

“Every Friday at 5pm, pull this week's sales numbers, support tickets, and marketing metrics. Summarize trends, flag anything off, and send the report to Slack.”

Your calendar, optimized

“Every morning, check my calendar. If I have back-to-back meetings with no break, move something or block 30 minutes for lunch. Send me the updated plan.”

Hiring

Posts it, screens applicants, books the interviews.

Meeting Follow-ups

Summarizes your meetings.

Creates the tasks, sends the follow-ups, updates the CRM.

Workflow Automation

Follows rules you write.

Figures out what needs automating and does it.

Building Tools

Writes the code. You figure out the rest.

Builds it, ships it, sends you the link.

HOW IT WORKS

Set it up once.

It works forever.

/01

Connect

Add Lobster to Slack, Discord, or iMessage. Connect your tools in one click — Stripe, Notion, Google Ads, and 3,000 more. You’re up in minutes.

/02

Ask

Talk to Lobster like a colleague. "Pull our Meta Ads data and compare vs. last month." "Create a Linear issue for the pricing update." "Build me a revenue dashboard."

/03

Lobster delivers

Lobster queries your tools, analyzes data, and delivers real outputs: PDFs, spreadsheets, web apps, code. It also schedules recurring tasks and proposes automations you didn't think to ask for.

The internet loves Lobster

FAQ

Is Lobster free? Yes. Lobster is free to use. Sign up, connect your tools, and start working with your AI employee. No credit card required.

Ready to automate?

Lobster is free. Launch your AI employee in 2 minutes.

Every feature. Every integration. Every role. No credit card. No catch.